Whoa!

I got snagged on this problem last month while juggling three trades across two L2s and a liquidity pool that kept re-pricing. My instinct said “just hit send” because momentum matters. But something felt off about the gas estimates, and I paused. Seriously? Yep. Pausing saved me from a sandwich attack and from a surprise approval that would have left funds exposed. Initially I thought speed was the only edge in DeFi, but then I realized reliability and foresight matter more, especially when slippage and MEV are breathing down your neck. Okay, so check this out: this piece walks through using a wallet that simulates transactions, inspects dApp integrations, and helps you reason about risk before you commit on-chain.

Here’s the thing. DeFi has matured. Protocols have wild composability. Intermediate users now chain three or four contracts in one user flow. That’s powerful, but fragile. If any call reverts or returns an unexpected result, you can lose gas, time, and sometimes funds. On one hand, composability unlocks innovation. On the other, it amplifies failure modes that used to be rare. Though actually, wait, let me rephrase that: composability is both the gift and the risk of DeFi, and a wallet that lets you simulate before you sign tilts that trade-off toward safer behavior.

Transaction simulation isn’t a gimmick. It’s a practical step with immediate payoffs. Think of it like a test run: you can see whether an attempted bundle would revert, how much the calldata costs, and whether a change in block state (like reserve shifts) would tilt your slippage unexpectedly. I’m biased, but wallets that bake transaction simulation into the UX cut down on failed txs noticeably, and that matters a lot for active traders. This is not just about convenience; it’s a security and efficiency move.

Why simulate? The short, messy truth

Hmm… gas is volatile. Transactions race. Bots ambush. Simulation gives you a preview. It shows returns, potential reverts, and the exact state the EVM would see, based on a snapshot. Intermediate traders often forget that a failing transaction still costs gas. So the core value is simple: waste less gas, avoid surprises, and make smarter decisions on-chain. On a deeper level, simulation exposes implicit approvals, allowance escalations, and slippage sensitivities in composed calls, the things UX buttons often hide behind “Confirm” screens.
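To put a number on that forgotten cost: the fee for a reverted transaction is roughly the gas it burned before reverting, times the price paid per unit of gas (base fee plus tip under EIP-1559). Here’s a minimal sketch. The function name and the sample figures (120,000 gas, 28 gwei base fee, 2 gwei tip) are made up for illustration, not taken from a real trade.

```python
from decimal import Decimal

WEI_PER_GWEI = 10**9
WEI_PER_ETH = 10**18


def failed_tx_cost_eth(gas_used: int, base_fee_gwei: Decimal, tip_gwei: Decimal) -> Decimal:
    """Fee (in ETH) for a transaction that reverts after consuming `gas_used` gas."""
    # Effective price per gas unit, converted from gwei to wei.
    price_wei = (base_fee_gwei + tip_gwei) * WEI_PER_GWEI
    return Decimal(gas_used) * price_wei / WEI_PER_ETH


# Hypothetical numbers: a composed swap that reverts late in execution.
cost = failed_tx_cost_eth(120_000, Decimal("28"), Decimal("2"))
print(f"Reverted tx still cost about {cost} ETH")
```

Multiply that by a handful of failed attempts in a busy hour and the case for a free dry run makes itself.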

Now, simulation is only as useful as the model it runs against. If a wallet simulates using a stale RPC, or ignores pending mempool state and the front-running hiding in it, the result may be optimistic. Advanced nodes and tracing infrastructure that record state changes give a richer picture. On one hand this sounds technical and boring. On the other, that tooling saves you from somethin’ costly. I’m not 100% sure every user needs the deepest trace, but the folks running frequent strategies do.

What a quality wallet should offer (and what to demand)

Short list first. You want: local transaction simulation, batched execution preview, allowance management with granular approvals, gas estimation that accounts for current mempool congestion, and a visual diff of state changes when possible. Longer thought: integration with hardware wallets, support for multiple networks and rollups, and ability to sign meta-transactions or replace txs (speed up/cancel) without losing composability. These features combine to give both power and safety.

Practical features matter. For example, granular approvals—approve exactly the amount or use permit signatures—minimize rogue spending and reduce long-term risk. A wallet that warns you when a dApp requests “infinite allowance” or shows the exact call data being sent (so you can audit it faster) gives you a huge edge. Some wallets still hide the calldata. That bugs me. I like when the UX prioritizes meaningful transparency over shiny animations.
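Here’s roughly what that “infinite allowance” warning boils down to. An ERC-20 `approve(address,uint256)` call starts with the selector `0x095ea7b3`, followed by two 32-byte words: the spender and the amount. This is a minimal sketch, not any particular wallet’s implementation; the helper names and the sample spender address are hypothetical, and treating only the maximum `uint256` as “unlimited” is my simplifying assumption.

```python
APPROVE_SELECTOR = "095ea7b3"  # first 4 bytes of keccak256("approve(address,uint256)")
MAX_UINT256 = 2**256 - 1


def decode_approve(calldata_hex: str):
    """Return (spender, amount) if calldata is an ERC-20 approve call, else None."""
    data = calldata_hex.lower()
    if data.startswith("0x"):
        data = data[2:]
    # Selector (8 hex chars) plus two 32-byte words (64 hex chars each).
    if len(data) != 8 + 64 * 2 or not data.startswith(APPROVE_SELECTOR):
        return None
    spender = "0x" + data[8 + 24 : 8 + 64]  # address is the low 20 bytes of word 1
    amount = int(data[8 + 64 :], 16)
    return spender, amount


def is_unlimited(amount: int) -> bool:
    """Assumption: only the max uint256 value counts as an 'infinite' allowance."""
    return amount == MAX_UINT256


# Hypothetical calldata: approve a placeholder spender for the maximum amount.
sample = "0x" + APPROVE_SELECTOR + "0" * 24 + "11" * 20 + "f" * 64
decoded = decode_approve(sample)
if decoded and is_unlimited(decoded[1]):
    print(f"Warning: unlimited allowance requested by {decoded[0]}")
```

A real wallet would likely also flag very large finite amounts and handle permit-style signatures, which this sketch ignores.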

Also: transaction simulation that can run against a user-selected block number, or simulate the mempool, helps for arbitrage or liquidation strategies. You can see whether a multi-step swap will actually route through the pools you expect, and whether a small delay will flip profitability. That kind of forward-looking tooling isn’t fluff. It’s operational clarity.

How dApp integration should behave

Good dApp integration does three things: it requests minimal permissions, it uses standardized signing flows, and it surfaces simulation results to the user. If a dApp asks to bundle multiple approvals or chain several interactions, a good wallet should offer a simulated dry-run and show a human-readable summary of what each step does. It’s plain common sense. I’m biased towards wallets that let you inspect each call in a decomposed way, with optional collapse for experienced users.

On the flip side, bad integration hides steps. It layers a UI flow that says “Sign to continue” and expects the user to trust. That’s where frontline exploits live. My instinct said trust less, verify more. So, when a dApp integration feels too opaque, I pull up the raw transaction and run a simulation. If I don’t get a clear result, I step back.

Simulator accuracy: what to watch out for

Accuracy depends on the node and the tracing layer. Simple eth_call against a fresh state snapshot is useful, but it can’t predict things that depend on mempool sequencing or off-chain oracle updates. More sophisticated simulation layers incorporate bundle simulation and MEV-aware models. Those give you perspective on front-running risk. They’re not perfect, of course. Prediction is probabilistic. Still, better models narrow surprises.
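For reference, the simple `eth_call` case looks something like this at the JSON-RPC level. This sketch only builds the request body and doesn’t send anything. The helper name and the addresses are placeholders I’m assuming for illustration. The second parameter is the block to simulate against: either a tag like `"latest"` or a hex-encoded block number.

```python
import json


def build_eth_call(tx: dict, block="latest", request_id: int = 1) -> str:
    """Serialize an eth_call JSON-RPC request for `tx` at the given block."""
    # Block numbers are sent as hex quantities; tags ("latest", "pending") pass through.
    block_param = hex(block) if isinstance(block, int) else block
    payload = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "eth_call",
        "params": [tx, block_param],
    }
    return json.dumps(payload)


# Placeholder addresses; the data field is a balanceOf(address) call (selector 0x70a08231).
tx = {
    "from": "0x" + "aa" * 20,
    "to": "0x" + "bb" * 20,
    "data": "0x70a08231" + "0" * 24 + "aa" * 20,
}
print(build_eth_call(tx))                    # simulate against the latest block
print(build_eth_call(tx, block=18_000_000))  # pin the simulation to a specific block
```

Whatever comes back reflects only the state at that block. Nothing here knows about pending transactions, which is exactly the gap the MEV-aware tooling tries to fill.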

Also, testnets lie to you sometimes. Never assume testnet behavior equals mainnet. Liquidity distribution, keeper bots, and oracle feeds are different. Use simulation as a guardrail, not a guarantee. That said, combining local simulation with defensive on-chain primitives, like slippage caps or time-locks, gives you a robust playbook for risky operations.

UX patterns that actually help (not just shiny bits)

Design for panic. When a user sees red (a revert risk, a huge allowance), they should have a one-click mitigation—reduce allowance, split the tx, or cancel with a replace-by-fee. Also helpful: transaction diffs that highlight changes to token balances, approvals, and transfer recipients. That little visual diff is worth more than a thousand lines of logs when you’re under time pressure.
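The balance part of that diff is simple to sketch: take a snapshot before the simulated transaction, take one after, and show only what moved. The token symbols, raw amounts, and function names below are hypothetical; a real wallet would pull these values from a trace and would diff approvals and recipients too.

```python
def balance_diff(before: dict, after: dict) -> dict:
    """Map token -> signed change, skipping tokens whose balance is unchanged."""
    tokens = sorted(set(before) | set(after))
    changes = {t: after.get(t, 0) - before.get(t, 0) for t in tokens}
    return {t: delta for t, delta in changes.items() if delta != 0}


def format_diff(diff: dict) -> list:
    """Render each change with an explicit sign so losses stand out."""
    return [f"{token}: {delta:+d}" for token, delta in diff.items()]


# Hypothetical snapshots in raw token units, before and after a simulated swap.
before = {"USDC": 5_000_000_000, "WETH": 0}
after = {"USDC": 2_000_000_000, "WETH": 10**18, "DAI": 0}

for line in format_diff(balance_diff(before, after)):
    print(line)
```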

Another practical thing: let users simulate multiple scenarios with minor parameter tweaks. Want to test slippage at 0.5%, 1%, and 2%? Run three sims and compare results. That small iteration loop helps you decide whether a trade meets your execution criteria without wasting on-chain gas. It’s like rehearsing a complicated move in chess before executing it in blitz time.
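That three-scenario loop can be sketched offline. I’m assuming a constant-product pool with a 0.30% fee on the input (the Uniswap V2 style of quote); the reserves, trade size, and function names are invented for illustration. The idea: quote against the reserves you see now, then check whether the trade would still clear each slippage floor if the reserves shift before execution.

```python
def amount_out(amount_in: int, reserve_in: int, reserve_out: int, fee_bps: int = 30) -> int:
    """Constant-product quote with the fee taken from the input (integer math)."""
    in_after_fee = amount_in * (10_000 - fee_bps)
    return in_after_fee * reserve_out // (reserve_in * 10_000 + in_after_fee)


def min_out(quoted: int, slippage_bps: int) -> int:
    """Smallest acceptable output for a given slippage tolerance in basis points."""
    return quoted * (10_000 - slippage_bps) // 10_000


def compare_tolerances(amount_in, quote_reserves, exec_reserves, tolerances_bps=(50, 100, 200)):
    """For each tolerance, report whether execution would meet the minimum output."""
    quoted = amount_out(amount_in, *quote_reserves)
    executed = amount_out(amount_in, *exec_reserves)
    return {bps: executed >= min_out(quoted, bps) for bps in tolerances_bps}


# Hypothetical pool: reserves at quote time vs. after someone trades ahead of you.
results = compare_tolerances(
    amount_in=10_000,
    quote_reserves=(1_000_000, 1_000_000),
    exec_reserves=(1_010_000, 990_100),
)
for bps, ok in results.items():
    print(f"{bps / 100:.1f}% tolerance: {'fills' if ok else 'would revert'}")
```

With these made-up reserves, only the 2% setting survives the shift. Whether that wider tolerance is acceptable is the execution-criteria call the rehearsal is meant to inform.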

Pro tip: integrate hardware wallet signing into the flow so the final sign-off happens on-device with clear context. The best wallets let you see the simulated result, then push the exact calldata to your hardware device for human verification. That separation of concerns reduces phishing risk enormously.

Where the ecosystem is heading—and where wallets fit

DeFi is getting more layered. Rollups, account abstraction, and sequencer models will change signing flows. Wallets need to evolve in parallel: simulation on the client, richer mempool visibility, and safer default permissions. I think wallets that bake in education—small contextual nudges that explain “why this allowance is dangerous”—will win trust. That matters in the U.S. market where users expect both power and consumer protections.

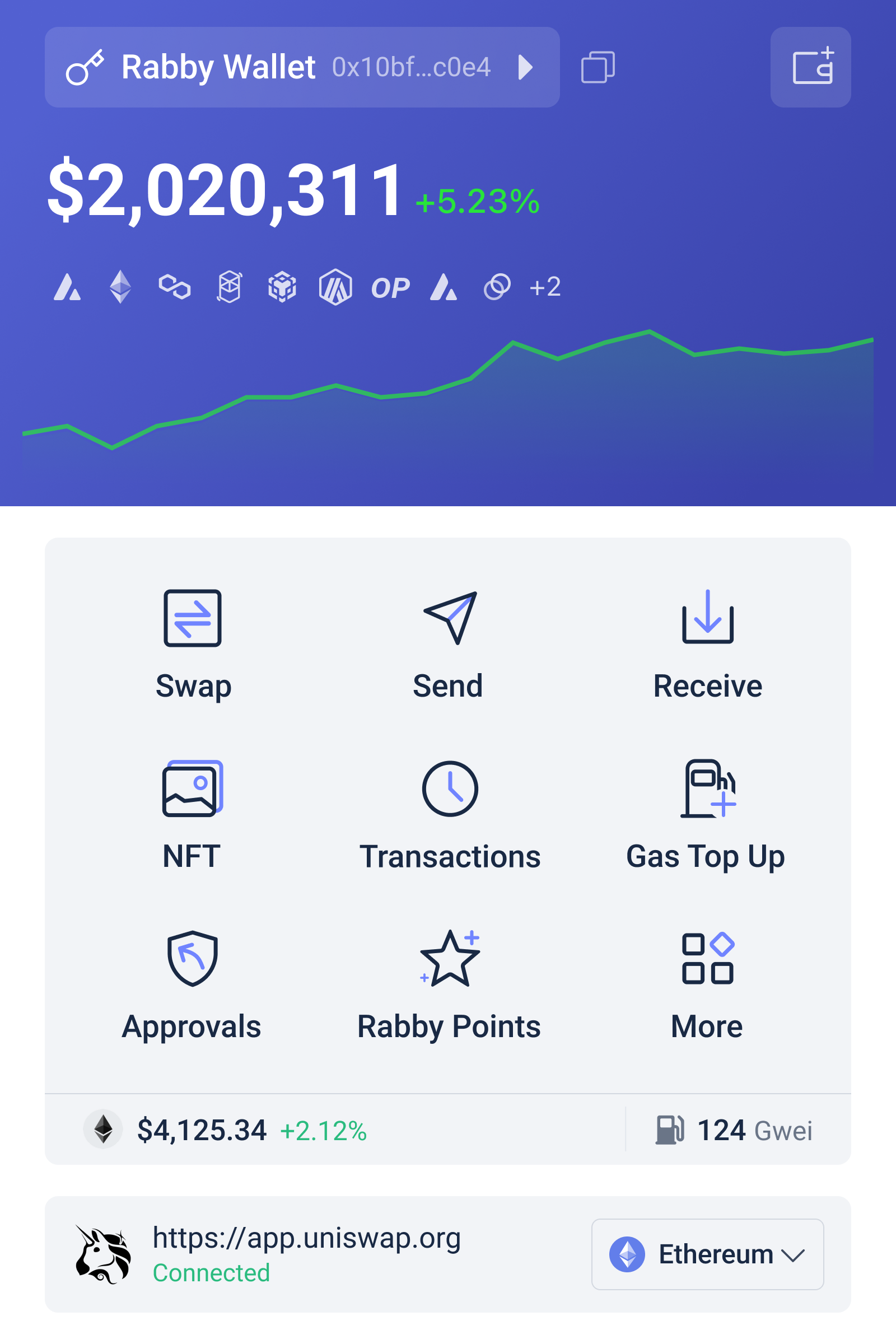

Okay, so check this out: I’ve been trying different wallets and integration setups, and one that consistently impressed me with simulation-first UX and granular permission controls is Rabby Wallet. It’s not perfect. No product is. But I appreciate the emphasis on transaction preview, allowance control, and network management. If you’re building or trading across DeFi stacks, it’s worth trying.

FAQ: quick answers to common concerns

Does simulation prevent all failed transactions?

No. Simulation reduces the risk but doesn’t eliminate it. It helps catch logical reverts, estimate gas, and expose allowance issues. It can’t perfectly predict mempool ordering or sudden oracle shocks. Use it as a guardrail, not a guarantee.

Is simulation slow or expensive?

Simulation usually runs locally or via a node and is free for users. Some advanced bundle simulations use paid infra, but most useful simulations are immediate and low-cost. The time you save by avoiding failed txs typically outweighs the milliseconds spent simulating.

How does simulation affect UX for beginners?

Good wallets hide complexity while offering optional detail. For beginners, simulation can be a behind-the-scenes safety check. For power users, it becomes a control panel. The trick is progressive disclosure—show what matters, while letting advanced users dig deeper.